I’ve always disliked writing test strategies and plans. Reviewing them was even worse: tedious, long documents that told me very little. They were usually close to a copy and paste, as projects tend to be pretty similar. I did play with the one pager but still, it felt like a pointless exercise. We already had a ways of working that incorporated testing.

In fact, inspired by Robbie Falck, I wrote our test strategy as a ways of working. That was well received by the teams, but there was a push from the business to have a documented test strategy per epic.

I ended up taking inspiration from the one pager, organised a meeting with the team and we filled it in together. I then carried on and mixed it up. Eventually I started seeing the value. It wasn’t the document; that was still as pointless as ever. The value was in the conversations we had, the risks we identified and the outcomes of the discussions about what we’d need to do.

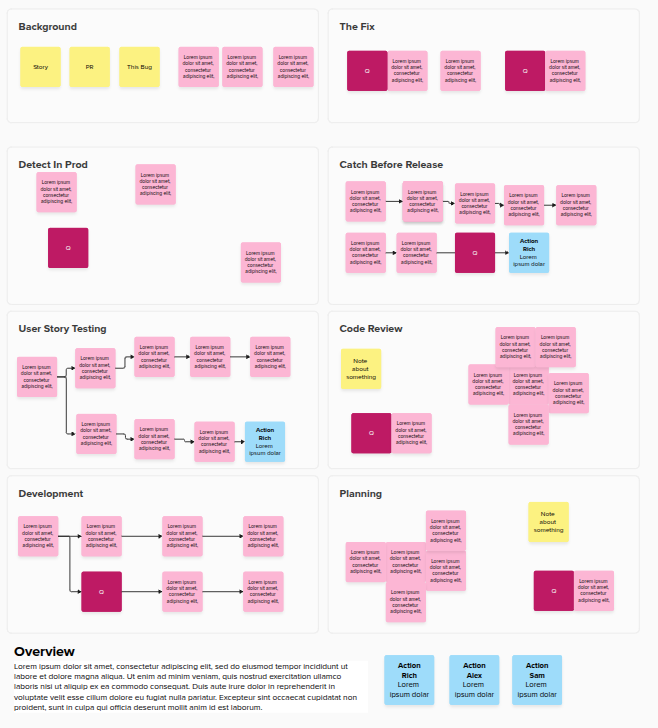

I liked doing this in phases. In our first session we typically started from a diagram of the system. What are we changing? What is impacted? What is the technology? (Later in my time I started by asking: what is the problem we’re solving?) I’d also try to get a feel for what we knew and didn’t know. We’d ask about API changes – do we need to do threat modelling? Finally, if there are barriers to testing the feature (kit, environment etc.), let’s highlight those early.

I could then catch up with the team, or a couple of folk, again and ask: what new things have we learnt? What new risks have appeared, and what progress have we made on the potential risks from our first chat? This is again done in a collaborative way. By now we should know more about the architecture, so I can tap into performance and load testing as well.

Whilst I evolved my templates for facilitating, I also explored different methods. Which one I used depended on the feature and our knowledge. I loved a diagram, but sometimes it was a series of prompts to ask questions, or a mind map of SFDIPOT. Varying it was a way to get us asking slightly different questions and keep things fresh. The point is the discussion, not filling in a form, which only leads to copy-paste strategies.

In terms of planning *how* to test everything, we focus that per story. If we identify dedicated testing activities, they become their own story. We shouldn’t need a document saying that we’ll do performance tests and unit tests. They are part of the definition of done or the acceptance criteria.

So I’m happy to do away with the test strategy documents. They are still worthless in my view. What I do value is facilitating discussions with the various team members to identify the risks and challenges we’ll face, then documenting the testing needs through the usual tickets.

At the end of the day, if we’re trying to shift left then why have distinct documents about testing? Instead, yes, let’s talk about the testing and intertwine that with what is required to close a story.